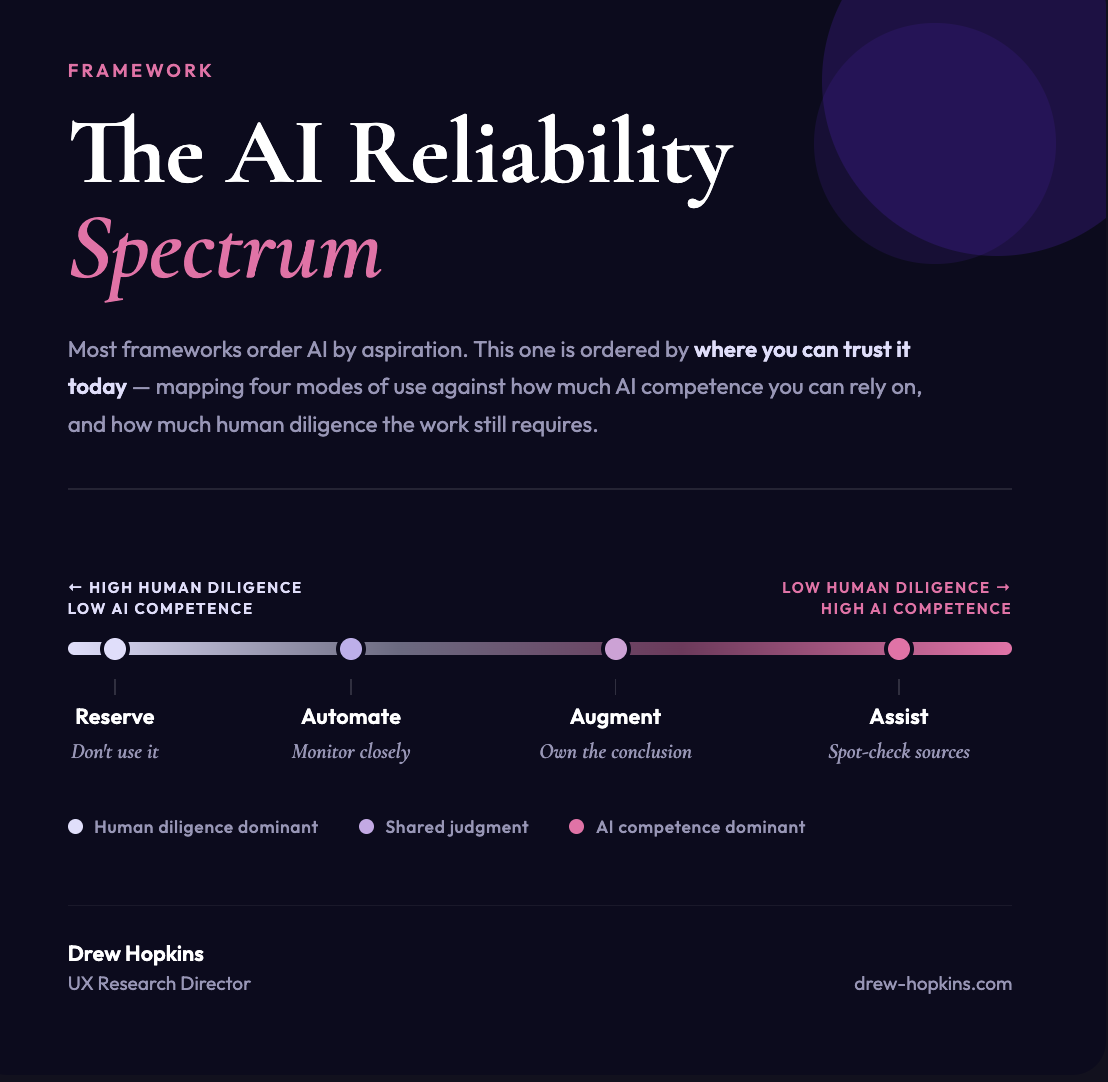

The AI Reliability Spectrum

In a previous post (https://lnkd.in/ei8DP_S8), I talked about the push-pull relationship I see between AI capabilities and trust, and the line we walk in exploring that balance.

I’ve been thinking more about that line. Could it look like a spectrum, one of AI competency and human diligence?

I’ve outlined the framework below… do you see truth to this or am I oversimplifying things? Are there any exceptions?

Co-written with Claude Sonnet 4.6

Overall confidence: 83%

Assumptions and gaps:

The reliability of narrow/scoped automation is assumed to be high, but it depends heavily on implementation quality.

Reserve edges will continue to shift as AI quality improves.

Deloitte Insights, Agentic AI Strategy (2026): https://lnkd.in/ehBnZcaV

Insight Partners 3A Framework: https://lnkd.in/euiuERAv

SFIA AI Skills Framework: https://lnkd.in/e-BbGT22

Machine Learning Mastery, Agentic AI Trends 2026: https://lnkd.in/e6mdeaZX