Acting on AI Confidence

Have you been using AI in the shadows? I know I have been. It might make us prolific geniuses in the short term, but wouldn’t your peers respect you more if you were upfront about your sources? Wouldn’t they like to know how trustworthy those sources are?

As researchers, we’re trained to question sources and separate out noise. In statistics, we emphasize confidence intervals because our inferences are only as good as their certainty.

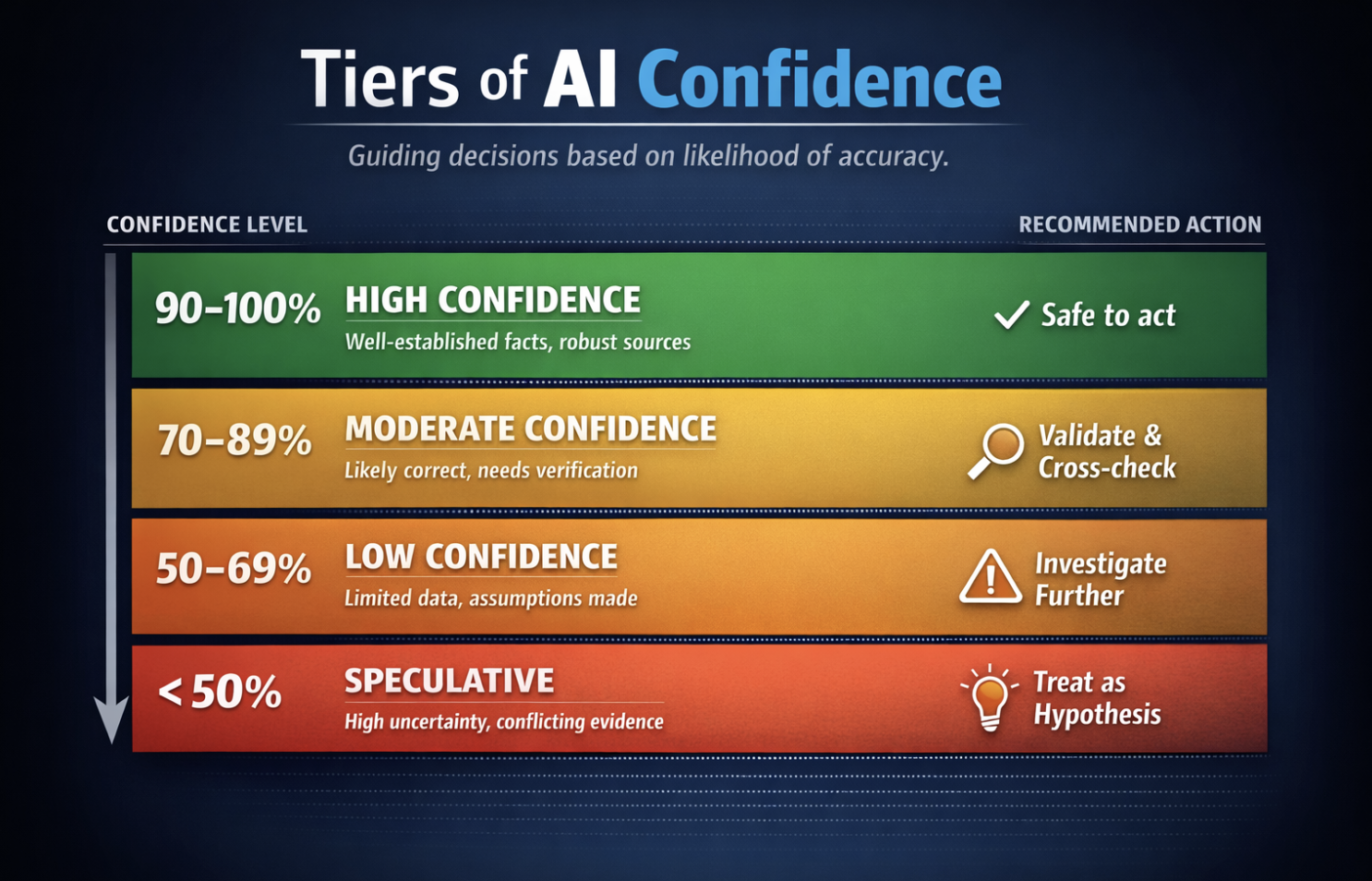

AI is far from perfect. We may need more conversations around AI confidence and structuring our decisions around confidence levels. Teams could even benefit from a shared standard for AI confidence in decision-making. It doesn’t have to be all-or-nothing either. Our actions and decisions could be tiered to the levels of confidence that support them.

90–100% (High confidence)

→ AI-output is grounded in well-established, widely documented facts

→ Safe to use in decision-making with light verification

70–89% (Moderate confidence)

→ AI-output is likely correct, but may involve some interpretation

→ Use with supporting validation and corroboration

50–69% (Low confidence)

→ AI-output has partial information, assumptions, or weak sourcing

→ Directional only — requires deeper investigation

<50% (Speculative)

→ AI-output has gaps, uncertainty, or conflicting data

→ Treat as hypothesis, not insight
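
For teams that want to write the tiers down somewhere more durable than a slide, here is a minimal sketch of the mapping above as a lookup. The names `Tier`, `TIERS`, and `tier_for` are made up for illustration, and the sketch assumes the team already has some way of assigning a 0–100 confidence score to an AI output, which is the hard part and is not covered here. It also assumes a score between two listed ranges (say 89.5) drops to the lower tier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    floor: float  # lowest confidence (in percent) that still lands in this tier
    label: str
    action: str


# Cutoffs copied from the example tiers above; a team would agree on its own.
TIERS = (
    Tier(90, "High confidence", "Use in decision-making with light verification"),
    Tier(70, "Moderate confidence", "Use with supporting validation and corroboration"),
    Tier(50, "Low confidence", "Directional only; requires deeper investigation"),
    Tier(0, "Speculative", "Treat as hypothesis, not insight"),
)


def tier_for(confidence: float) -> Tier:
    """Return the tier for a confidence score given in percent (0 to 100)."""
    if not 0 <= confidence <= 100:
        raise ValueError("confidence must be between 0 and 100")
    # TIERS is ordered from highest floor to lowest, so the first match wins.
    for tier in TIERS:
        if confidence >= tier.floor:
            return tier
    raise AssertionError("unreachable: the last tier has a floor of 0")


if __name__ == "__main__":
    result = tier_for(85)
    print(f"{result.label}: {result.action}")
```

Keeping the cutoffs in one table means changing the standard later is a one-line edit rather than a hunt through everyone's habits.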

This is just an example, and we could decide with our teams on the exact ranges. Not all scenarios would need the same confidence either (for example, this post sits at 85% and I’m good with that because it is very conceptual). Where would you draw the line?