Prioritizing AI Accuracy

A lot of people ask an AI a question, get a polished answer, and move on. Until recently, I was one of them. If we don’t structure our conversations around truthfulness, we’re outsourcing our judgment without realizing it.

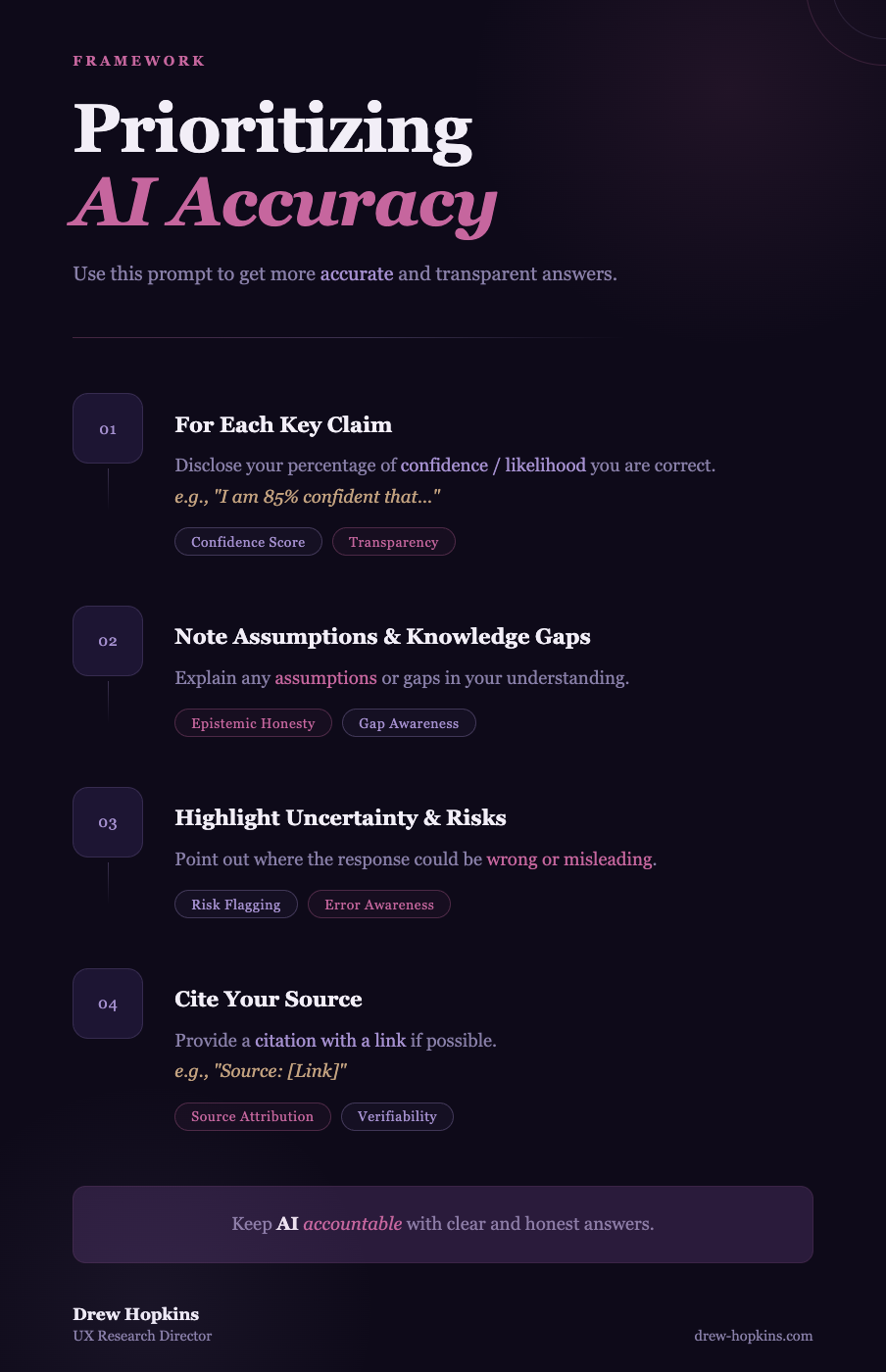

Here’s a quick prompt I started using with AI for fewer hallucinations and more transparent reasoning:

“For each key claim:

Disclose your percentage of confidence / likelihood you are correct

Note any assumptions or knowledge gaps

Highlight where you could be wrong or misleading

Cite your source (with a link if possible)”
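If you want to reuse the prompt instead of retyping it, here is a minimal Python sketch that appends the checklist to any question. The names `TRANSPARENCY_INSTRUCTIONS` and `build_prompt` are hypothetical, made up for illustration; the code only builds the text and does not call any AI service.

```python
# Hypothetical helper: these names are illustrative, not part of any library.
TRANSPARENCY_INSTRUCTIONS = [
    "Disclose your percentage of confidence / likelihood you are correct",
    "Note any assumptions or knowledge gaps",
    "Highlight where you could be wrong or misleading",
    "Cite your source (with a link if possible)",
]


def build_prompt(question: str) -> str:
    """Return the question followed by the transparency checklist."""
    checklist = "\n".join(f"- {item}" for item in TRANSPARENCY_INSTRUCTIONS)
    return f"{question.strip()}\n\nFor each key claim:\n{checklist}"


if __name__ == "__main__":
    # Paste the printed text into whichever AI tool you use.
    print(build_prompt("What causes AI hallucinations?"))
```

Keeping the checklist in one place means every question gets the same four requests, which makes answers easier to compare over time.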

I’m curious about how other folks are thinking about reliability with AI — what’s worked (or failed) for you?

Source:

ChatGPT

Article Weaknesses and Knowledge Gaps:

AI-generated “confidence percentages” can create a false sense of precision—they are not true probabilities.

Models may still hallucinate sources or citations, even when explicitly asked not to, and this article offers no empirical evidence that the prompt prevents it.

The prompt could give users the impression that adding structure ensures reliability, when human judgment is still required.

The prompt may not significantly improve outcomes for simple or well-known factual queries.
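One rough way to test the first weakness yourself is to log the confidence the AI states for each claim, fact-check the claim by hand, and compare stated confidence with how often it was actually right. The sketch below assumes you keep that log as `(stated_confidence_pct, was_correct)` pairs; the function name `calibration_table` and the sample records are hypothetical placeholders, not real measurements.

```python
from collections import defaultdict


def calibration_table(records, bucket_width=20):
    """Group (stated_confidence_pct, was_correct) pairs into confidence buckets.

    Returns {bucket_start: (observed_accuracy_pct, number_of_claims)}.
    """
    buckets = defaultdict(list)
    for confidence_pct, was_correct in records:
        # Clamp so that a stated 100% lands in the top bucket.
        start = min(int(confidence_pct // bucket_width) * bucket_width,
                    100 - bucket_width)
        buckets[start].append(was_correct)
    return {
        start: (100 * sum(outcomes) / len(outcomes), len(outcomes))
        for start, outcomes in sorted(buckets.items())
    }


if __name__ == "__main__":
    # Made-up placeholder log: (confidence the AI stated, whether my own check agreed).
    sample_log = [(90, True), (95, False), (90, True),
                  (85, False), (60, True), (65, False)]
    for start, (accuracy, count) in calibration_table(sample_log).items():
        print(f"stated {start}-{start + 20}%: {accuracy:.0f}% correct over {count} claims")
```

If claims stated at 80 to 100 percent turn out right far less often than that, the percentages are better read as a tone signal than as probabilities. A handful of claims is too few to conclude much, so treat the table as a prompt for your own judgment.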

Supporting Sources:

AI hallucinations and mitigation strategies (Stanford HAI)

https://hai.stanford.edu/news/ai-hallucinations-why-they-happen-and-how-fix-them

NIST AI Risk Management Framework (trust, reliability, human oversight)

https://www.nist.gov/itl/ai-risk-management-framework

Prompt engineering and reliability considerations (OpenAI guidance)

https://platform.openai.com/docs/guides/prompt-engineering

Nielsen Norman Group on research rigor and triangulation

https://www.nngroup.com/articles/triangulation-user-research/