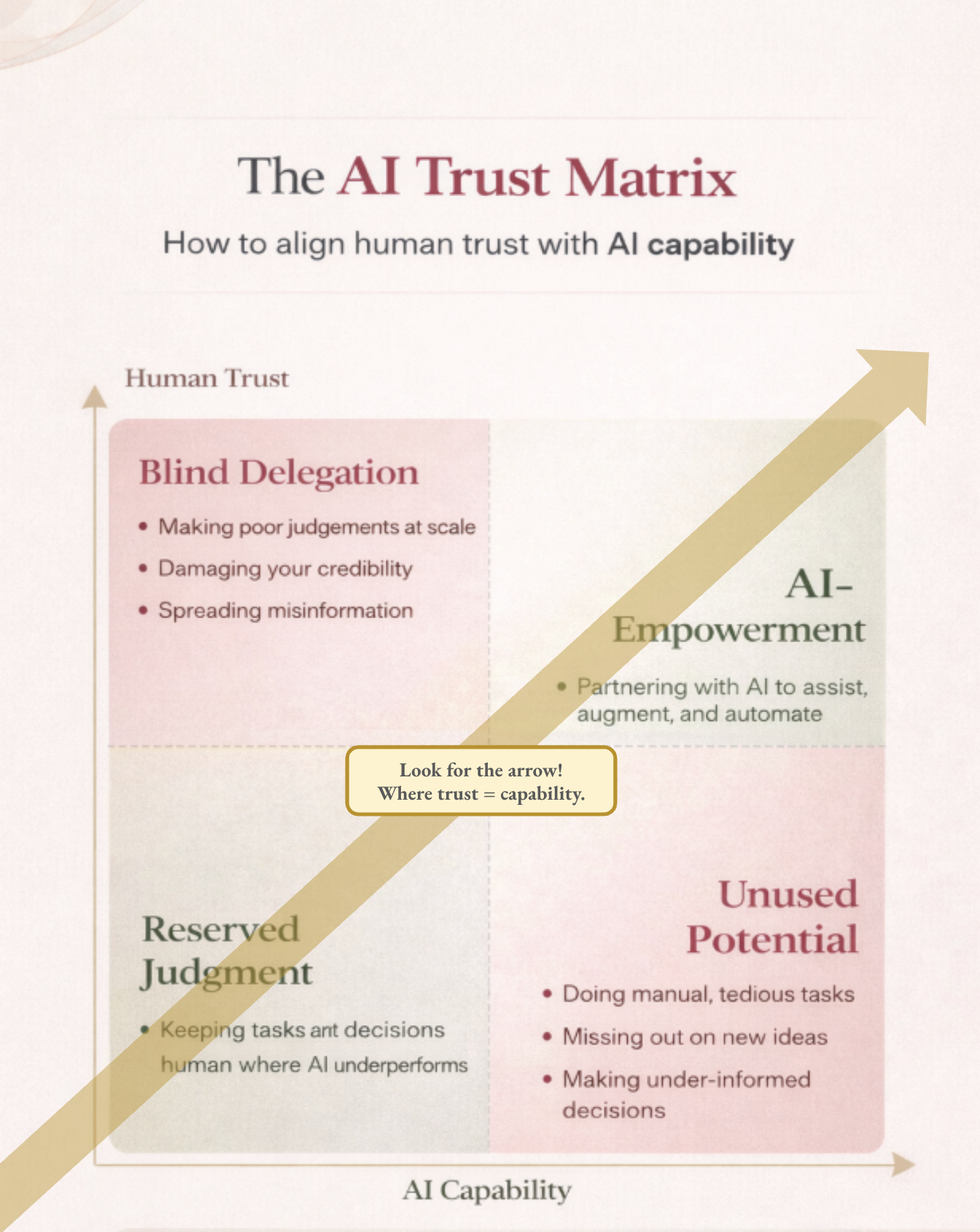

The AI Trust Matrix

AI tools aren't hard because they are difficult to use. They are hard because they are deceptively inconsistent!

It is all too easy to get comfortable, trusting outputs because they look clean and sound confident, not because you actually verified them. Then on the flip side: one bad result or frustrating experience can make you avoid that situation entirely!

It’s a weird balance, I think, and it's sometimes tough to walk the line. But there IS a line, and it is important to keep it in mind.

I notice it really depends on what you're trying to accomplish and on the tools or models you need to get there. I've been thinking about it in terms of a push-pull between human trust and AI capability. Trust has to continuously adapt to the capability of the tools or models you’re using.

1. AI Empowerment (High Capability + High Trust)

Your trust matches the AI's actual reliability for the task

You can move fast without losing quality

2. Blind Delegation (Low Capability + High Trust)

You trust it more than you should

Outputs look polished but can be wrong or shallow

3. Unused Potential (High Capability + Low Trust)

The tool is capable, but you aren't using it

You do manual, tedious chores that could be accelerated

4. Reserve Judgement (Low Capability + Low Trust)

You don’t trust it, and you are right to do so

You evaluate and benchmark often, or do it yourself
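
If it helps to see the matrix as a simple lookup, here is a minimal sketch in Python. The names `Quadrant` and `classify` are hypothetical, and reducing capability and trust to yes/no flags is a simplification for illustration; in practice both are a matter of degree and change from task to task.

```python
from enum import Enum


class Quadrant(Enum):
    """The four cells of the trust matrix, named as in the list above."""
    AI_EMPOWERMENT = "AI Empowerment"
    BLIND_DELEGATION = "Blind Delegation"
    UNUSED_POTENTIAL = "Unused Potential"
    RESERVE_JUDGEMENT = "Reserve Judgement"


def classify(high_capability: bool, high_trust: bool) -> Quadrant:
    """Map (capability, trust) flags to a quadrant of the matrix.

    Both inputs are simplified to booleans for this sketch.
    """
    table = {
        (True, True): Quadrant.AI_EMPOWERMENT,
        (False, True): Quadrant.BLIND_DELEGATION,
        (True, False): Quadrant.UNUSED_POTENTIAL,
        (False, False): Quadrant.RESERVE_JUDGEMENT,
    }
    return table[(high_capability, high_trust)]


# Example: the tool is weak at this task, but you trust it anyway.
print(classify(high_capability=False, high_trust=True).value)
```

The useful part is less the code than the habit it suggests: ask both questions separately for each task, instead of carrying one overall feeling about "AI" into everything.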

Do you relate to this? Do you tend to fall on one side of these spectrums or another?

Article and Infographic co-created with ChatGPT 5.3

Confidence

Overall confidence: 88%

Assumptions / Gaps / Potentially Misleading Points

Generalizes inconsistency as the main challenge (may underweight skill or prompt quality)

Based on personal/observed behavior, not formal evidence

Doesn’t account for domains where AI is consistently reliable or consistently unreliable

Sources

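Parasuraman & Riley (1997), "Humans and Automation: Use, Misuse, Disuse, Abuse": automation bias

Lee & See (2004), "Trust in Automation: Designing for Appropriate Reliance": trust in automation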

Observed usage patterns across GPT-4 / Claude in knowledge work contexts