Jenius Bank

Building a Research Function from Scratch

SMBC, a global investments institution headquartered in Tokyo, launched Jenius Bank as its strategic entry into U.S. consumer banking.

The initiative aimed to create a modern, full-service digital bank with cutting-edge financial-wellness tools. Unlike many fintech startups, Jenius was built inside a large regulated enterprise, requiring the organization to balance startup-like speed with the operational rigor of a global investments firm.

Within the first three years, we launched two financial products (savings and personal loans), a storefront, two digital platforms (web/mobile) and a financial wellness differentiator.

We successfully scaled the business to:

250,000 active customers

$2B in deposits and $2B in loan balances

50k transfers, 35k payments, and 7k mobile deposits

My Role

I joined Jenius in its early formation as Director of Research and Innovation, responsible for establishing the organization’s research capability and embedding customer insight into its day-one launch and beyond:

defining the bank’s ideal customer profile and product differentiation

supporting go-to-market strategy and brand positioning

guiding product strategy across savings, lending, and future offerings

building and scaling the UX research function from the ground up

Organizational Scope

The research function operated in close partnership with leadership across CX, design, and product.

CX Leadership Team:

Drew Hopkins — Director, Research & Innovation

Tracey Dunlap — Head of CX

Dean Valentin — Design Director

Research Team:

Sarah Harris — Staff UX Researcher

Grant Emory — Senior UX Researcher

Hannah Elbigarah — Senior UX Researcher

Danica Calderon — Senior UX Researcher

John Miramontes — UX Researcher

Tools

UserZoom, Qualtrics, SurveyMonkey, EnjoyHQ, Figma, Confluence, Miro, Fintech Insights, Corporate Insight, Comperemedia

Personas and Discovery

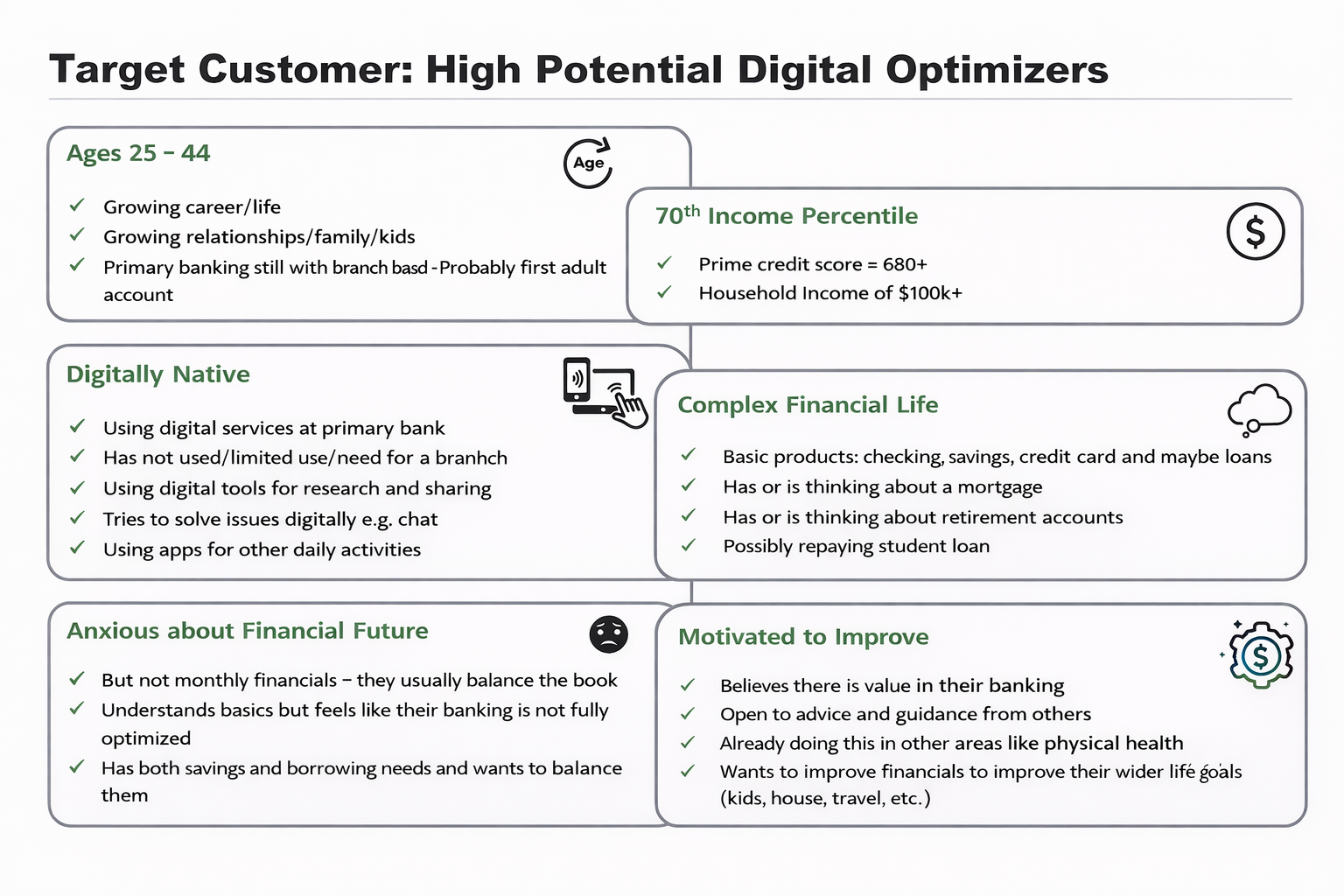

The Jenius Bank ethos was to empower customers toward financial wellness by embedding personalized insights into everyday banking interactions. However, there was a lot of ambiguity around what this meant in practice. Leadership had aligned on a “high earners, not rich yet” (HENRY) target customer, but this strategy was largely grounded in intuition and lacked behavioral depth.

Early intuitions around target customer segments.

To address this, I led a series of interviews (2 × n=10) within and outside the HENRY segment, followed by two sets of focus groups (4 × n=8): one with users of financial tools and another with users of non-financial, goal-oriented apps. My goal was to understand financial behaviors and broader goal-setting mindsets.

Surprisingly, these studies revealed consistent variation in financial goals regardless of income group. Some participants were overwhelmed and needed foundational support (monitoring, awareness), while others focused on optimization or aligning finances with identity and long-term aspirations.

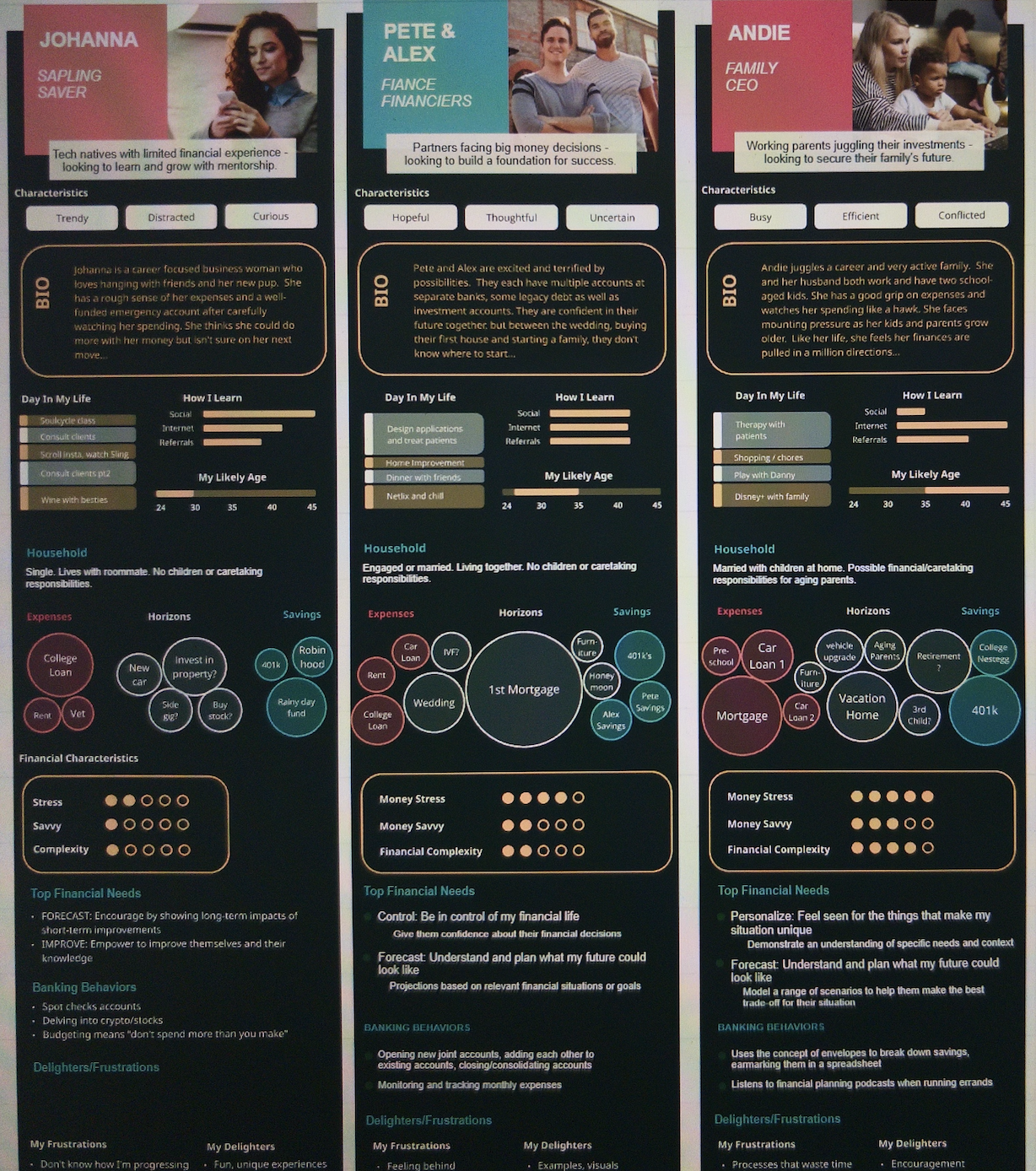

Family structure and maturity emerged as a stronger predictor of needs than income alone, leading to sub-segmentation snapshots within the HENRY cohort. Those with more mature families, in particular, expressed deep financial stress, complexity across the household, and anxiety about their future.

Revised personas introducing family structure corresponding to behavioral and mindset differences.

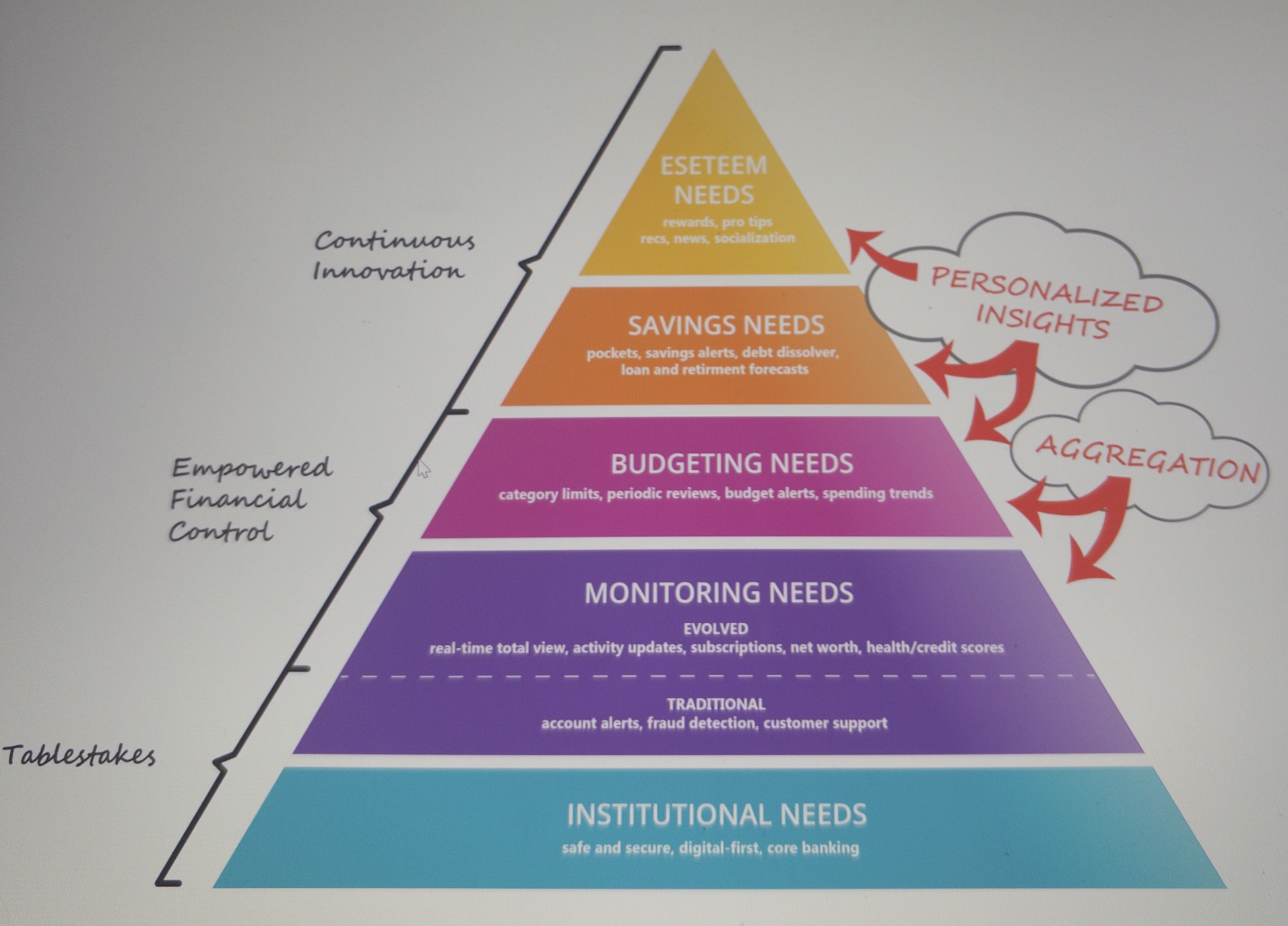

I synthesized the needs uncovered during the discovery phase into a hierarchical model. It became clear that although personalized insights were useful, they were psychologically dependent on a foundation of reliable financial budgeting and monitoring.

I then assessed the needs for proportional relevance across HENRY segments (n>1000), looking specifically for trends across income, age, and household structure. Results showed needs were evenly distributed across income levels. However, when viewed in terms of age and household, the findings challenged leadership’s key assumptions. Participants who expressed the most financial stress were much older than expected. Those with mature families in particular, who had the most stress, needed basic monitoring and budgeting support more than personalized insights.

In partnership with product and marketing teams, I refined the target to mid-to-high income families aged 35 to 55 and adjusted the product concept to support a more fundamental spectrum of needs. This approach solved real needs for a real target while also making strategic sense: if the MVP could solve for basic budgeting and monitoring as a foundation, the product could grow to solve higher-order financial goals as it evolved with its customer base.

Accordingly, we moved forward with solutions focused on Monitoring, Budgeting, and Savings (future-thinking) needs.

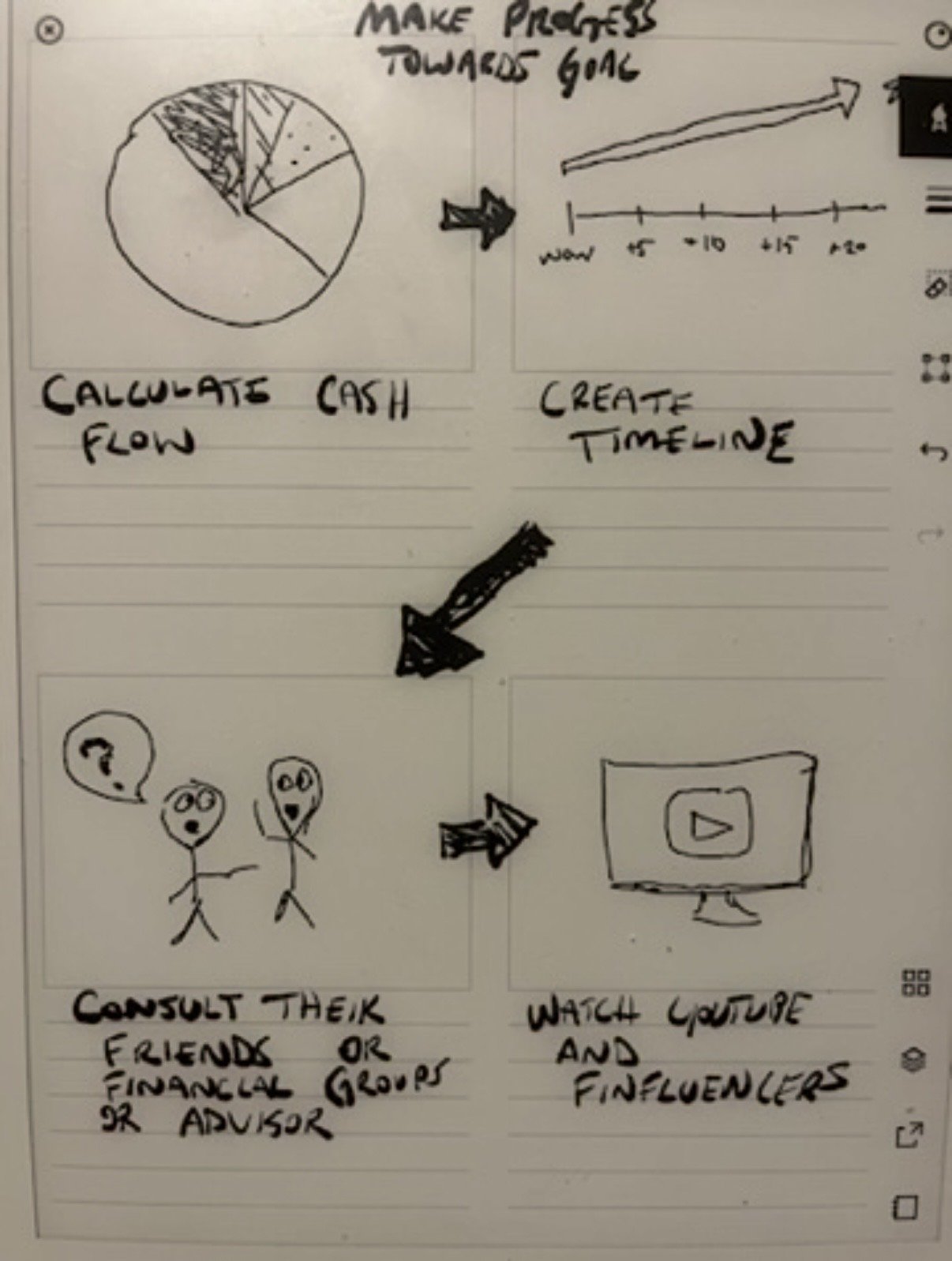

Concept Ideation

To translate our needs into an actionable direction, I worked with product teams through several cross-functional workshops that defined and prioritized the opportunity space, grounded in customer voices.

We deconstructed each of the needs into five to six solution-oriented “How Might We” (HMW) opportunities. We then evaluated the opportunities based on our feasibility to solve for them in MVP, their alignment with the brand, differentiation potential, and potential to impact the customer. We moved forward to ideation with the top two HMW opportunity categories in each of the three needs.

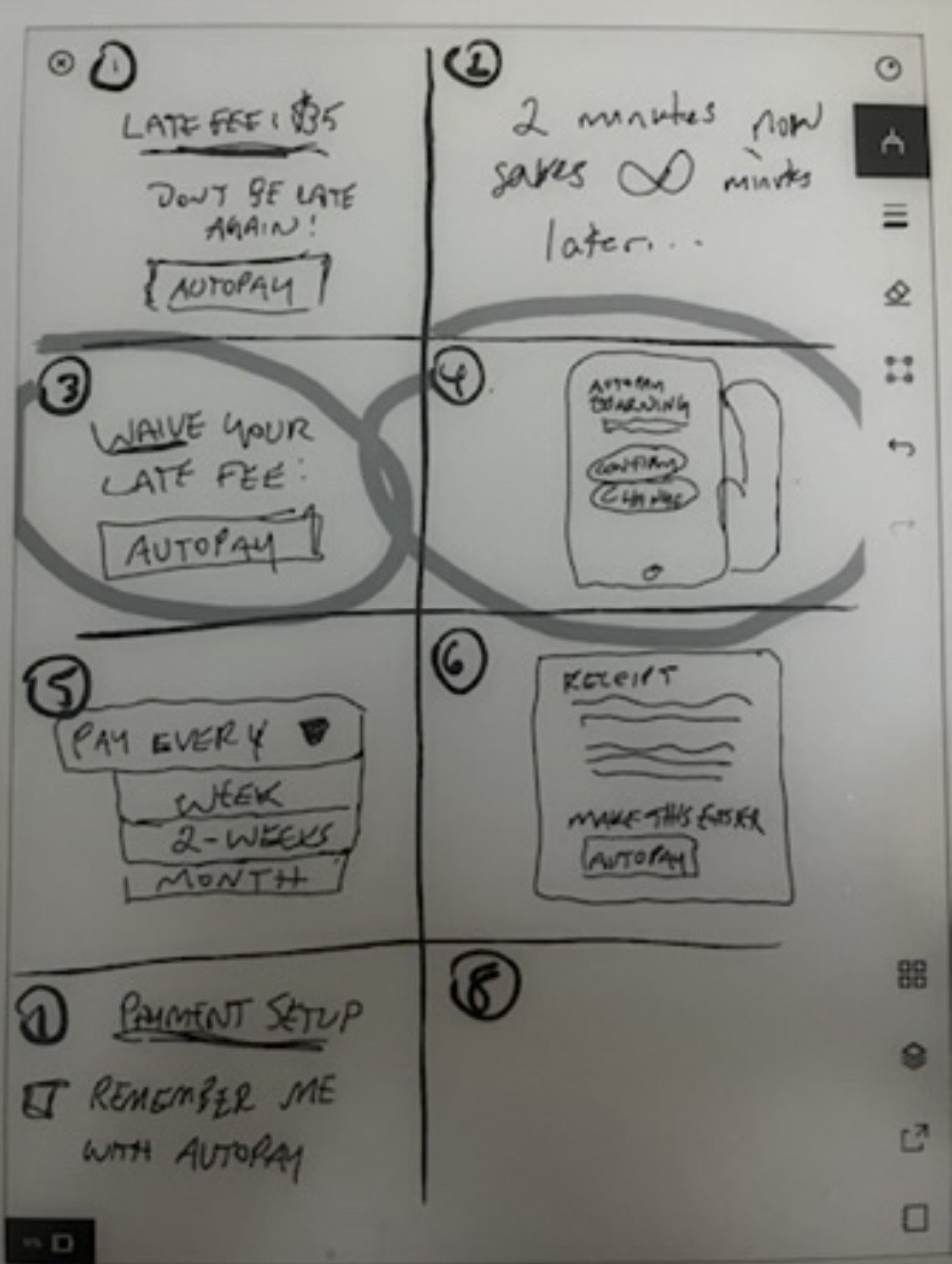

I worked with the teams through ideation sessions on our top-ranking opportunities, using techniques such as Crazy 8’s and round-robin refinements. Team members worked through the brainstorming exercises individually and selected their best ideas, and then we shared and rated them as a group.

We journey mapped scenarios in groups for our top-tier ideas to envision context and end-to-end use, fleshing out blind spots. We then explored naming conventions and component placement strategies to push the boundaries on our potential concept designs.

This process moved the team from abstract needs to testable concepts aligned to both customer behavior and strategic differentiation.

Sample of brainstorm workshop on brand and titling.

Testing and Refinement

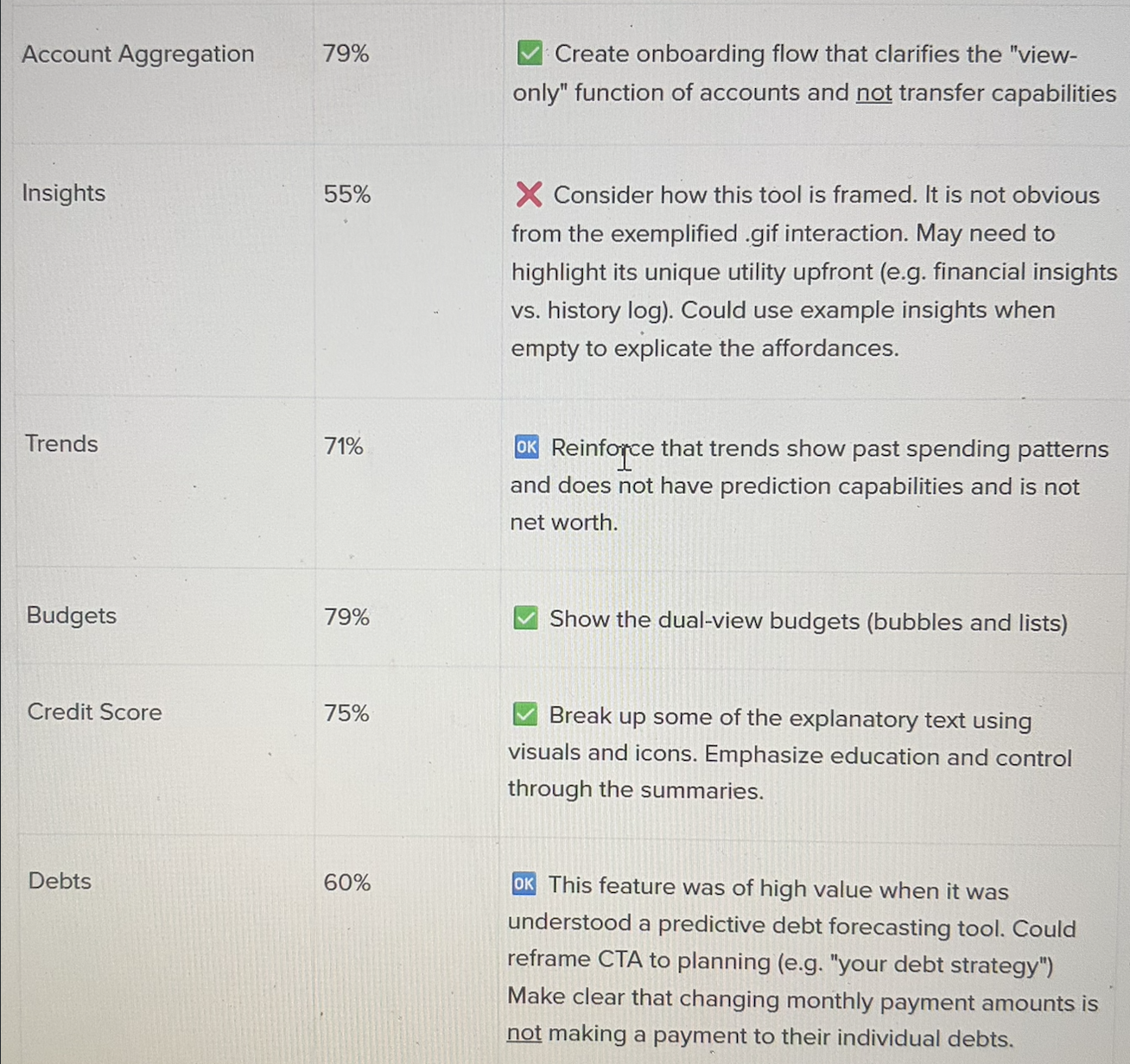

From ideation, we synthesized our ideas into 9 MVP-worthy wireframes and tested appetite in a large-scale survey. Participants rated each concept in terms of perceived value and provided any feedback or recommendations to improve the concept, then ranked all the concepts in terms of value at the end.

Excerpt of preference testing report. Percentages of customers that valued the feature (scored 4/5 or higher).

This allowed me to identify the highest-value opportunities while also improving early ideas. Given time and resource constraints, the team moved forward with two core features that would define the MVP: Jenius View and Forecast.
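The “valued the feature” percentage in the preference report is a top-two-box style score: the share of respondents who rated a concept 4 or 5 out of 5. As a minimal sketch of that arithmetic, assuming a 1 to 5 rating scale, the snippet below computes the score per concept and orders concepts by it. The concept names and ratings are hypothetical placeholders, not study data, and the function name is illustrative.

```python
from collections import defaultdict


def top_box_share(ratings, threshold=4):
    """Return the percentage of ratings at or above `threshold` (0.0 if empty)."""
    if not ratings:
        return 0.0
    valued = sum(1 for rating in ratings if rating >= threshold)
    return 100.0 * valued / len(ratings)


# Hypothetical responses: (concept name, rating on an assumed 1-5 value scale).
responses = [
    ("Concept A", 5), ("Concept A", 4), ("Concept A", 2), ("Concept A", 3),
    ("Concept B", 3), ("Concept B", 4), ("Concept B", 1), ("Concept B", 2),
]

# Group ratings by concept.
ratings_by_concept = defaultdict(list)
for concept, rating in responses:
    ratings_by_concept[concept].append(rating)

# Score each concept, then order concepts from highest to lowest share.
scores = {c: top_box_share(r) for c, r in ratings_by_concept.items()}
ranked = sorted(scores, key=scores.get, reverse=True)
```

A threshold-based share like this is easy to compare across concepts, but it discards the distinction between a 4 and a 5, which is one reason to pair it with the end-of-survey ranking described above.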

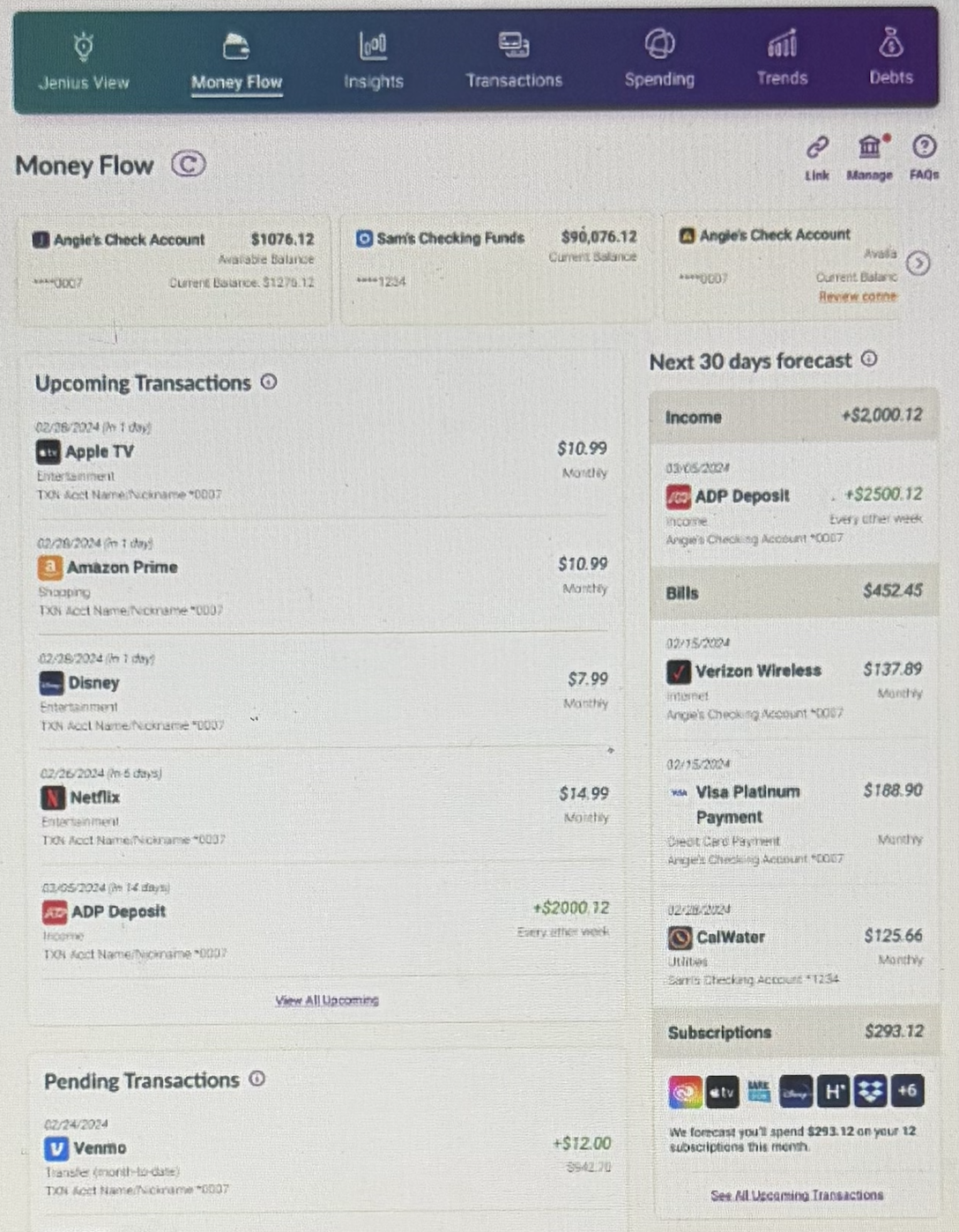

Jenius View provided a centralized snapshot of a customer’s financial picture—including net worth, credit score, and aggregated assets and liabilities—while Forecast projected upcoming bills and expected balances based on historical behavior.

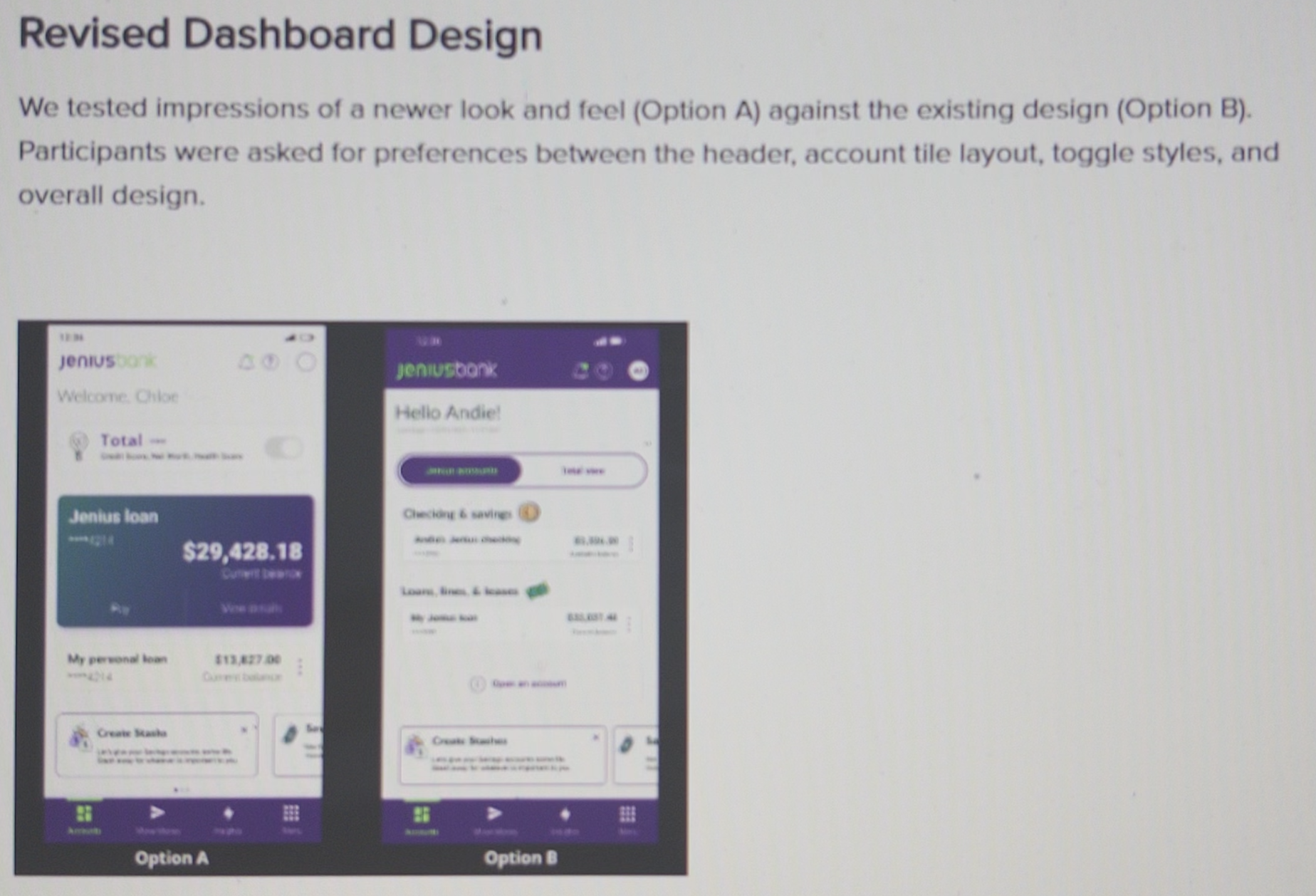

I led usability testing on several iterations of high-fidelity designs for Jenius View. We explored entry point options (e.g., tab vs. slider), component prioritization, terminology (e.g., “assets/liabilities”), and overall comprehension.

Overall, the feature was intuitive and highly valued, with the exception of the net worth component and entry point. I conducted focused impression and preference tests for these higher-friction areas; we iterated and the feature quickly became a fluid experience.

Excerpt of usability testing report between dashboard iterations.

Forecast was a different story. It created immediate tension in usability testing. While it resonated conceptually in wireframes, high-fidelity testing revealed serious confusion. The interface was complex and cognitively demanding, and users struggled to differentiate it from regular transactions.

To address this, I reframed the issue with more context, yet the confusion persisted. I leveraged clips and verbatims to convince product leadership to make an essential pivot.

Early high-fidelity prototype of “Money Flow”, later renamed “Forecast”.

We dramatically simplified what was the original focus of the tool – upcoming transactions – into a calendar view. We then visually prioritized what we were hearing was more valuable: the bills and subscriptions monitors. The results were striking!

The feature drastically improved in usability, reception, and overall performance. Being flexible to how users aligned the tool to their needs was a winning strategy!

Research Foundation

As Jenius Bank scaled, demand for research emerged across teams. In the early stages, however, the organization lacked an understanding of how research could operate and how teams could best engage with it.

Many requests were solution-driven and late-stage. Opportunities for discovery were being missed and timeline expectations were not feasible. Without a clear operating model, research risked becoming reactive, fragmented, and difficult to scale.

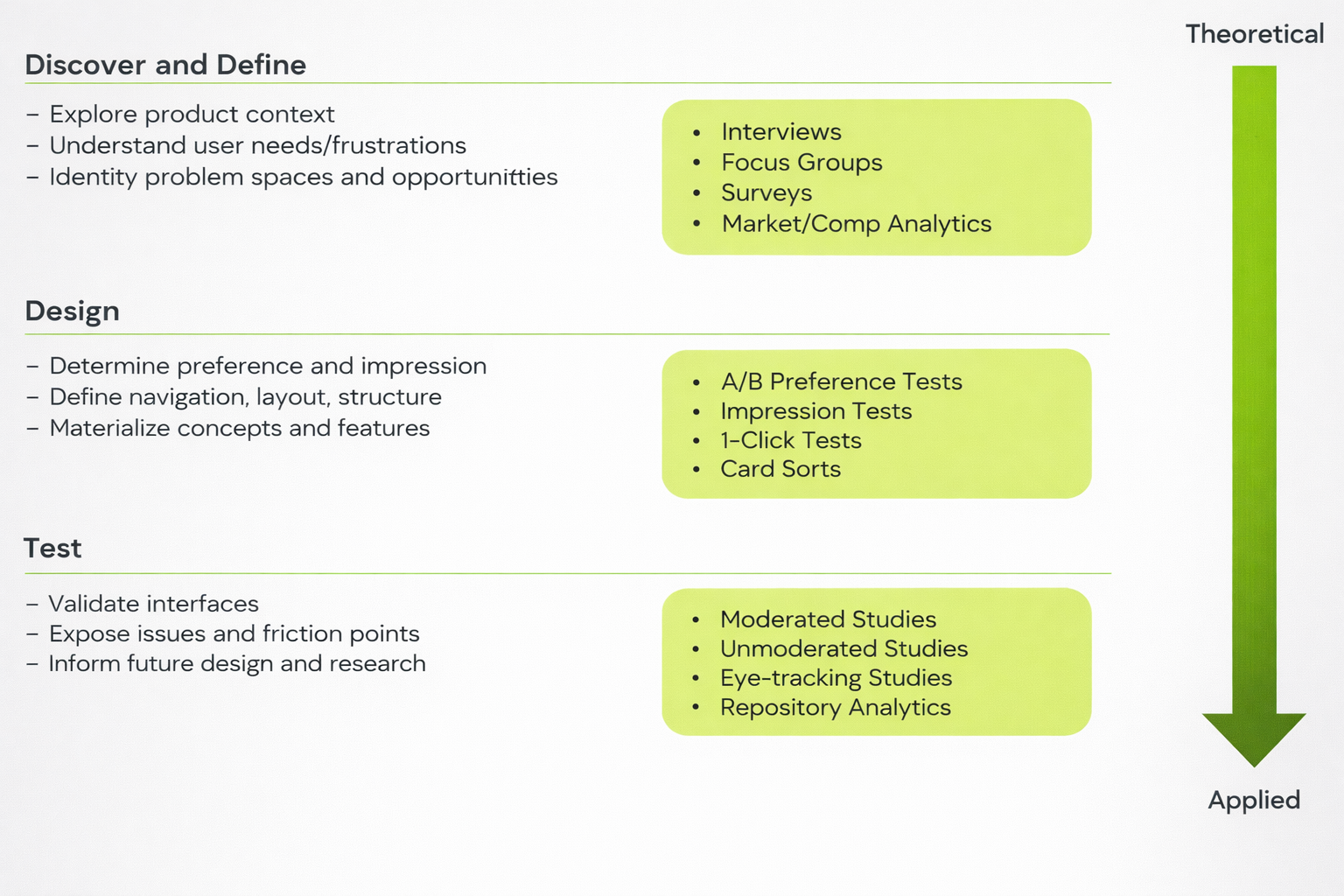

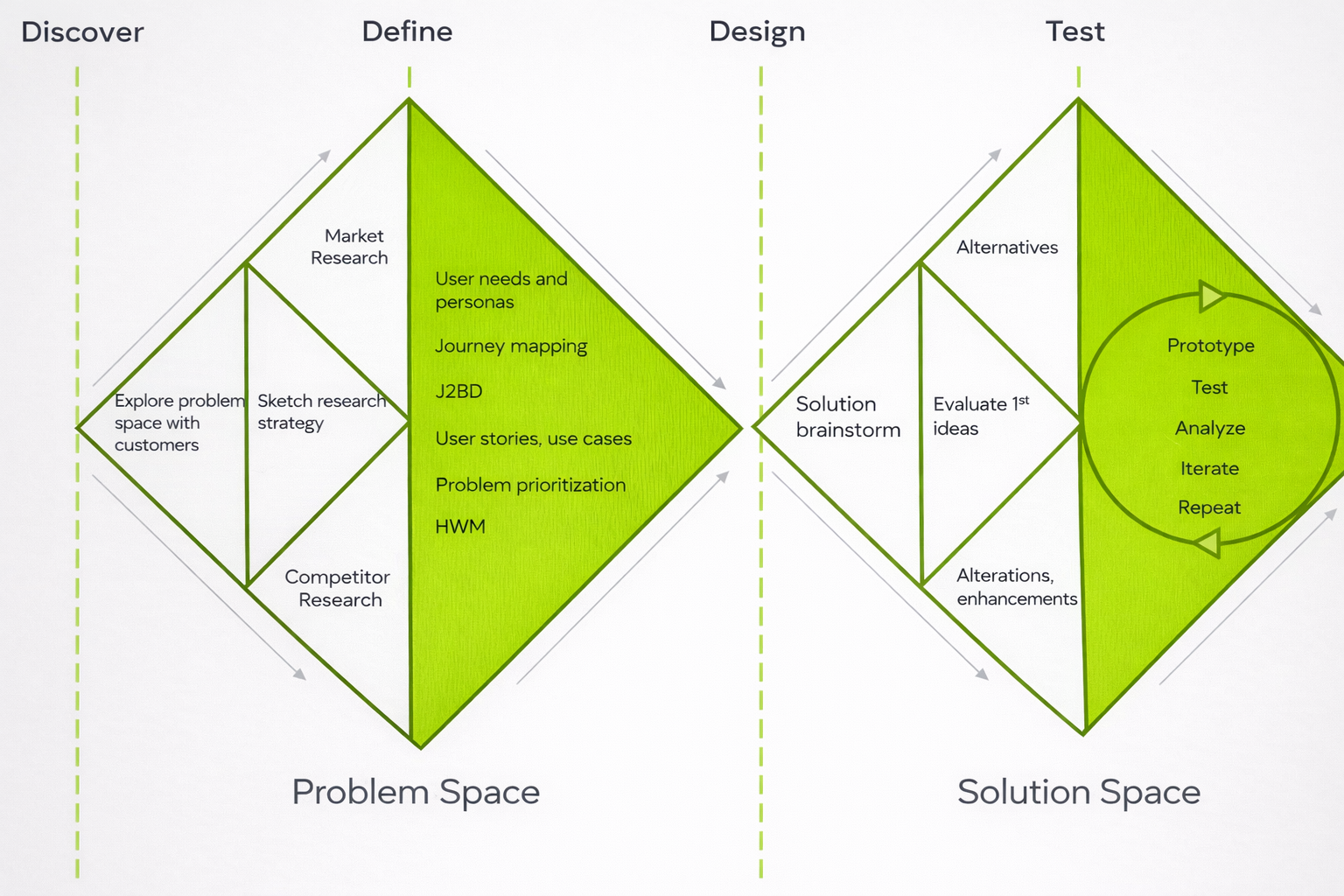

Strategy and Education

I introduced foundational research frameworks through several demos and forums to help stakeholders understand how research supports the product lifecycle.

This clarified when research should occur, what types of questions it could answer, and how it fit within product and design processes. It also explained how research could adapt its level of rigor and confidence to stakeholder needs.

Research Playbook

Next, I developed a standardized research playbook covering:

research methods, use cases, and trade-offs

typical timelines per method with consideration for planning and synthesis

touchpoint expectations for collaborative scoping, planning, and reporting

recruitment strategies and statistical significance

This gave our team a shared language for processes that were transparent and repeatable across business functions. The business evolved through consistent engagement with us and easy reference to our playbook.

Standardized Intake

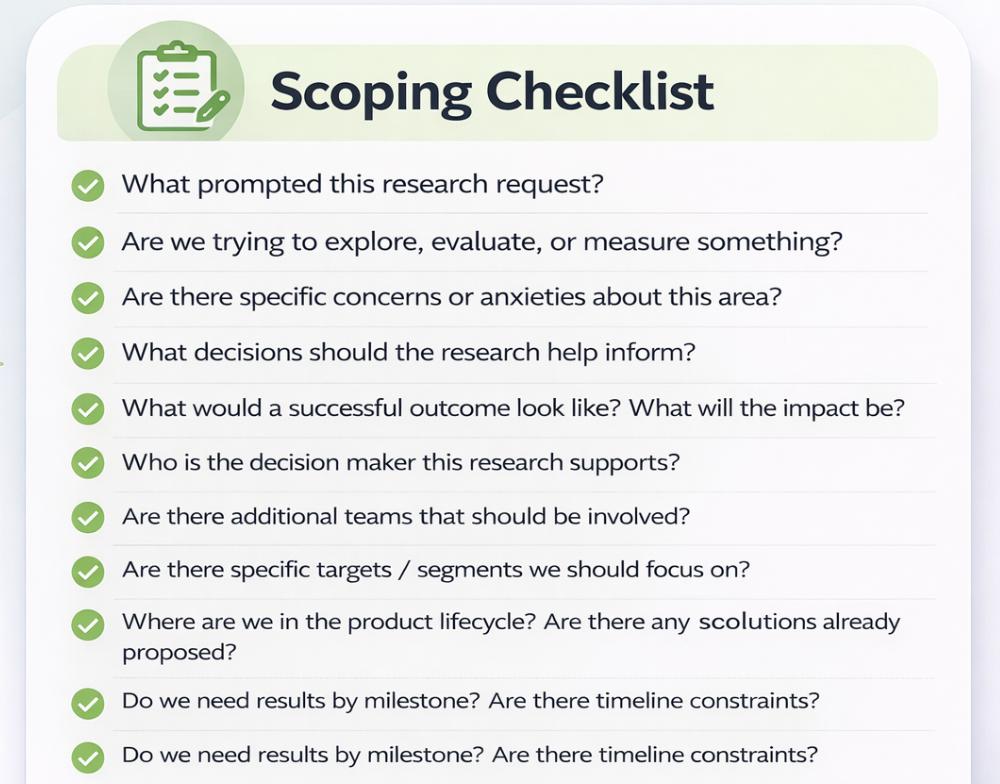

As research demand increased, I introduced a centralized intake process for stakeholders and scoping guidelines for researchers that proactively required teams to articulate what was commonly missing:

business objectives and context

problem-space with research questions

timeline constraints

decision points the research would inform

This created consistent research engagement and helped transition requests from informal conversations to thoughtful planning.

Insight Infrastructure

As research expanded, insights became increasingly difficult to discover and reuse. When findings are stored and shared only within immediate project teams, insights become scattered. So I created a scalable knowledge infrastructure to prevent intel silos later on.

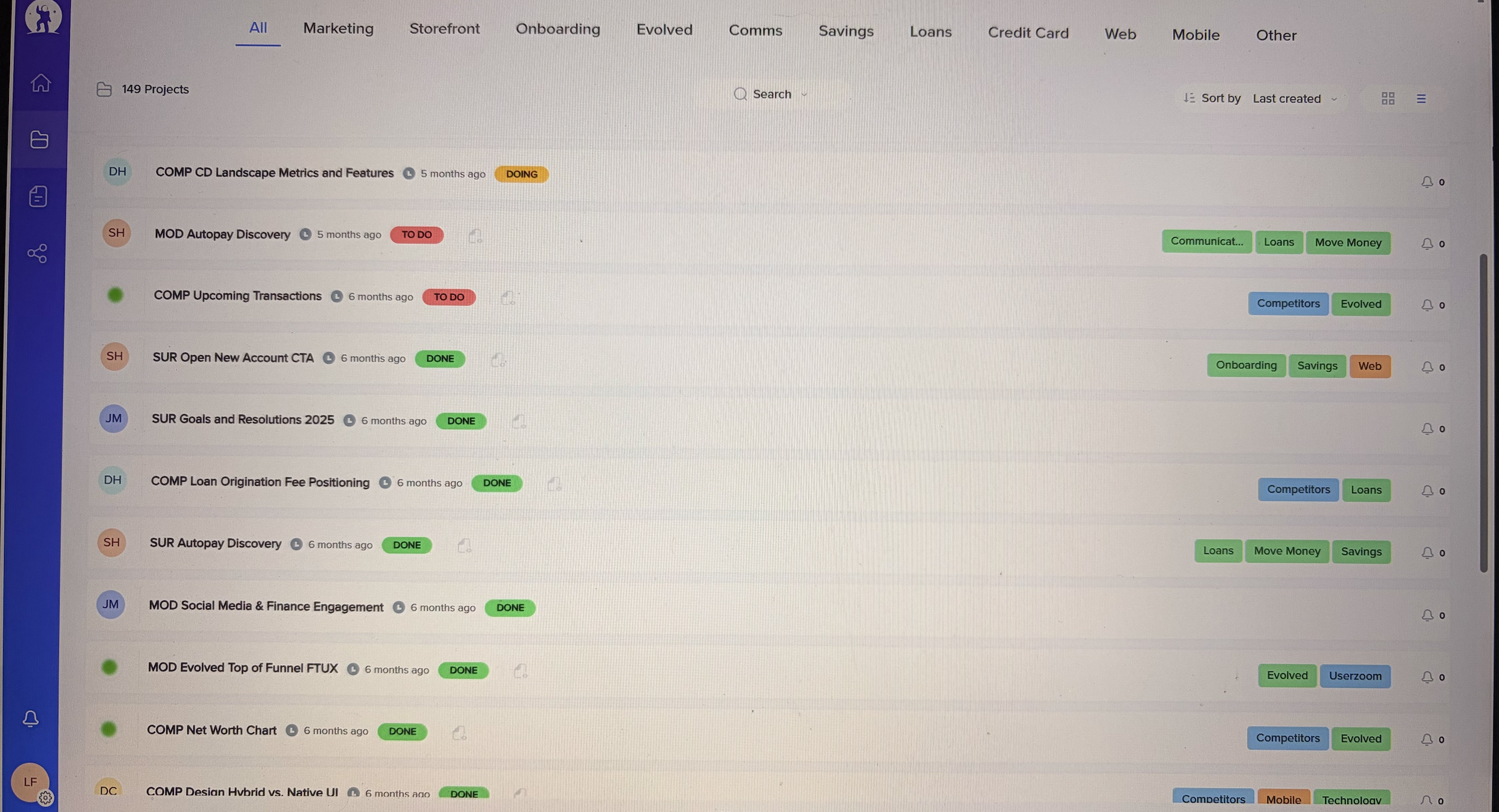

Centralized Research Repository

I implemented a dedicated repository in EnjoyHQ where studies, artifacts, and reports could be stored in a searchable format. This created a single source of truth for research across the company.

Sample of our research repository infrastructure.

Taxonomy and Tagging Systems

To make insights discoverable, I designed a framework that organized studies by consistent titling, a primary category (product or marketing domain), and several secondary attributes.

I unified the titling convention and categories across other systems where studies had dependencies (UserZoom, SharePoint, and Jira).

I initially experimented with tagging individual insights, but it proved too heavy for the organization’s pace. I shifted to a lighter model that supplemented tagging with secondary attribute categories. This offered multiple entry points depending on how teams were approaching a problem, for example:

business or insight theme

research method

device type

participant source

This structure balanced searchability with operational speed, allowing the repository to scale easily.
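As a rough illustration of this lighter model, the sketch below represents each study as a record with a title, one primary category, and a small set of secondary attributes, then filters on any combination of them. The class, function, field names, and sample studies are all hypothetical; they are not EnjoyHQ’s data model or API, only a way to show how the attribute categories act as multiple entry points.

```python
from dataclasses import dataclass, field


@dataclass
class StudyRecord:
    """One repository entry: a title, one primary category, secondary attributes."""
    title: str
    primary_category: str  # product or marketing domain
    attributes: dict = field(default_factory=dict)


def find_studies(records, primary_category=None, **attribute_filters):
    """Return records matching the primary category and every attribute filter given."""
    matches = []
    for record in records:
        if primary_category and record.primary_category != primary_category:
            continue
        if all(record.attributes.get(key) == value
               for key, value in attribute_filters.items()):
            matches.append(record)
    return matches


# Hypothetical sample entries using the secondary attributes listed above.
repository = [
    StudyRecord("Navigation card sort", "product",
                {"theme": "navigation", "method": "card sort",
                 "device": "mobile", "participant_source": "panel"}),
    StudyRecord("Dashboard usability round 2", "product",
                {"theme": "monitoring", "method": "usability test",
                 "device": "mobile", "participant_source": "customers"}),
    StudyRecord("Brand message test", "marketing",
                {"theme": "positioning", "method": "survey",
                 "device": "web", "participant_source": "panel"}),
]

mobile_product = find_studies(repository, primary_category="product", device="mobile")
panel_studies = find_studies(repository, participant_source="panel")
```

Because attributes sit at the study level rather than on individual insights, a new study needs only a handful of fields filled in, which is the trade-off in favor of operational speed described above.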

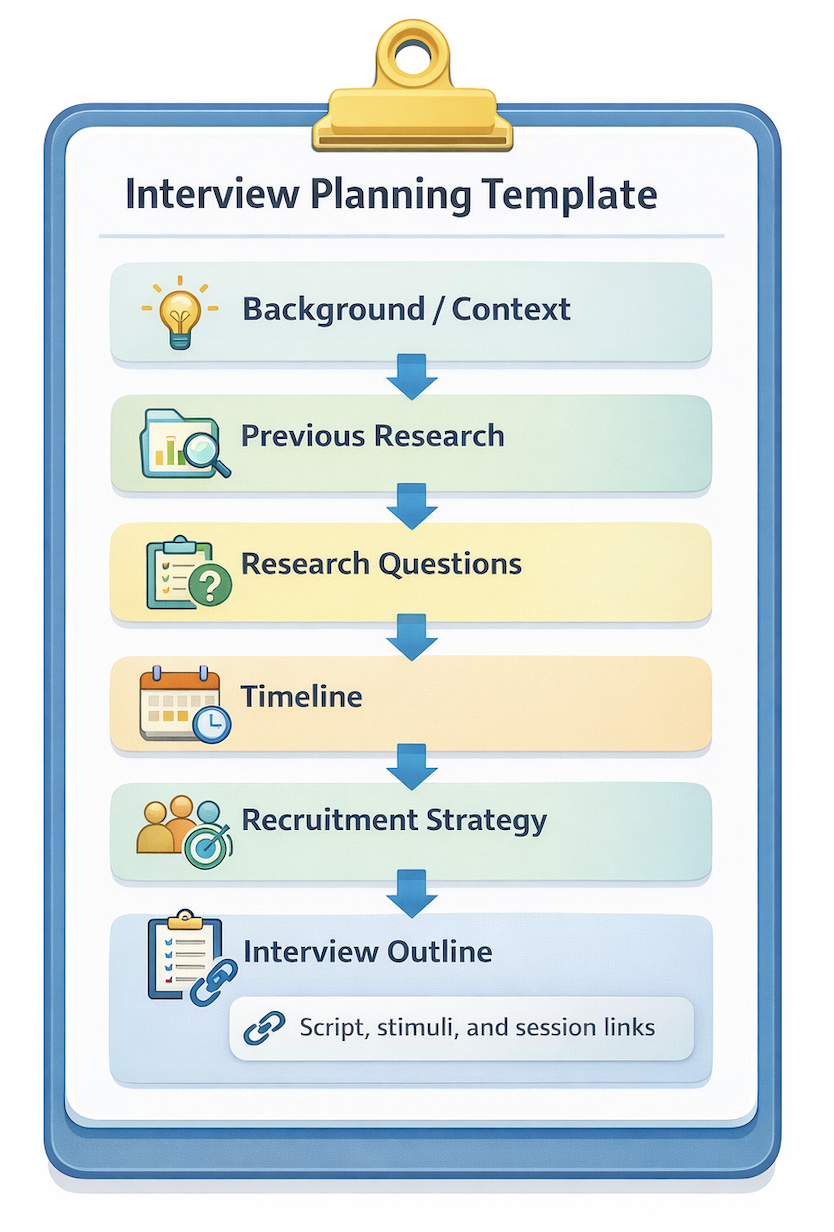

Standardized Outputs

To ensure consistency across studies, I curated templates for research plans and reports that were specific to methodology. For example, an interview planning template included the following sections to bootstrap research preparation.

Prioritization and Roadmap

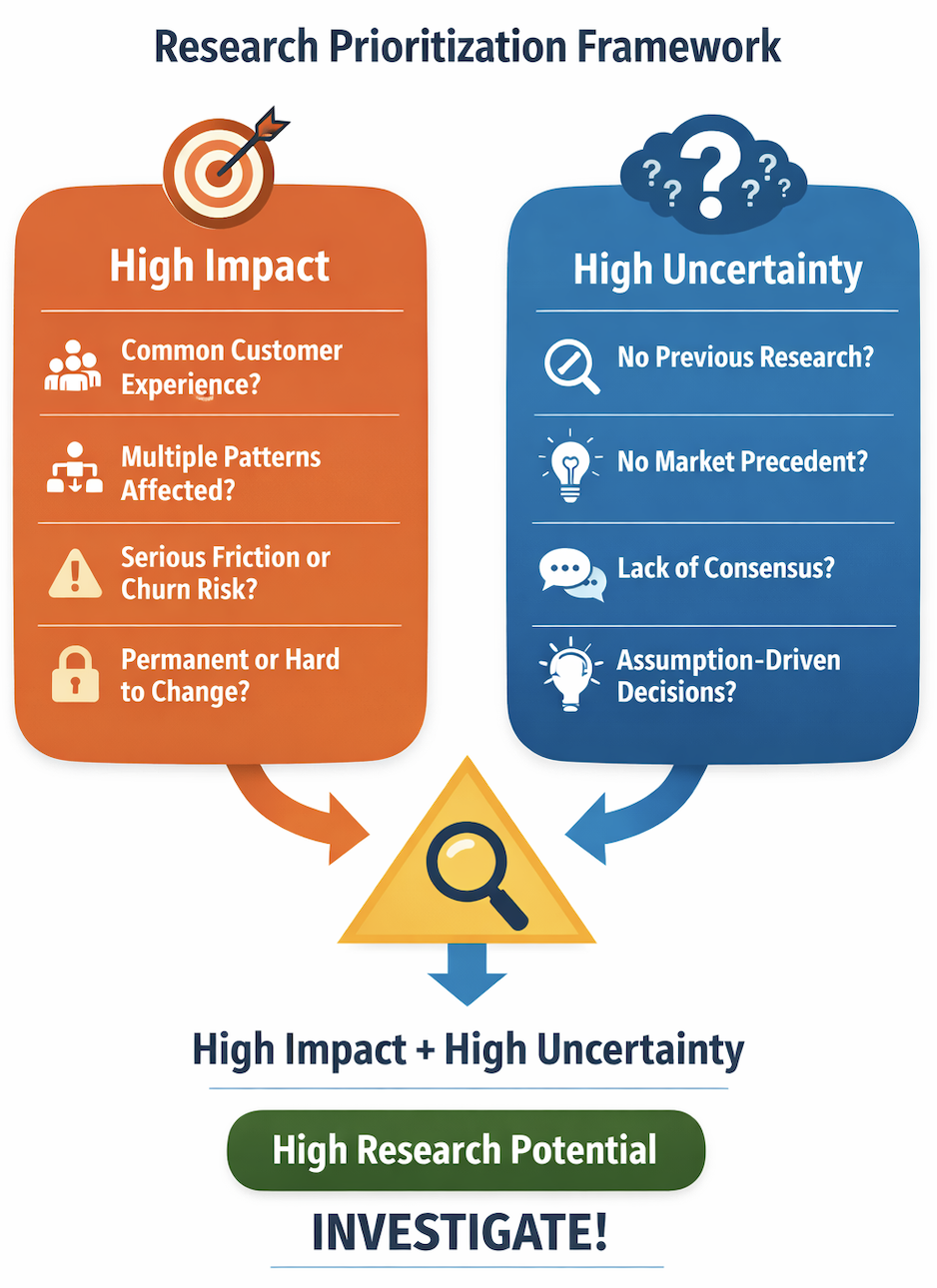

As the business grew, the pace of growth began to strain our intake process. Not all requests mapped cleanly to a single business domain. More complex problems started to create overlapping but dissimilar asks across multiple teams. It became difficult to align studies with all the right voices and the total business impact.

We needed a more systematic approach to governing demand and capacity.

Backlog and Steering Committee

I started organizing our intake into a shared backlog. I categorized efforts by method, timeline, and vertical so that I could visualize with stakeholders the demand we had across the organization.

I regrouped with stakeholders across the business in a monthly forum. In the steering committee, I surfaced key insights from recent studies, then discussed upcoming research to de-risk competing priorities. I did this through the lens of impact versus certainty. In cases of conflict, our team then had some foresight to reallocate or consolidate our efforts.

Research Triage for Web / Mobile 2.0

For particularly complex, cross-functional projects, stakeholders needed help aligning their teams to a strategic direction.

For example, our web / mobile 1.0 was essentially out-of-the-box, but 2.0 presented opportunities to customize and add functionality within the capacity of design and engineering. Brand, design, product, platform, strategy, and engineering leadership all had various and conflicting perspectives on how to approach this.

I led a series of workshops to slow down and unify the conversation towards a shared reality:

Grounded teams in existing personas and the business ethos / mission.

Brainstormed research needs in a psychologically safe space. No idea was a bad idea.

Asked teams to (honestly) prioritize needs on a grid of Uncertainty vs Impact.

Synthesized needs with redundancies and identified potential study overlap.

Debriefed teams on the outcomes and negotiated the highest impact / uncertainty needs together.

Pressure-tested these against design and engineering.

Sample of our workshop series around research alignment and prioritization.

We discovered many needs shared a common anxiety around navigation and feature-appetite. We addressed this holistically through market intel, a card sort, and preference testing. We then narrowed and tested design concepts on navigation, which corresponded to other secondary anxieties stakeholders held.

As a result, teams had a clear and cohesive understanding of the research momentum and trade-offs; research needs that were de-prioritized were done so for the greater good.

AI-Empowerment

As generative AI tools were rapidly evolving, I saw an opportunity to improve our efficiency and insight generation as a team.

Team Touchpoints

Transparency is foundational for me in AI adoption. Rather than fragmented experimentation, I provided a structured exploration of AI for the team. Every month we met up to discuss or informally compare notes on how we were using it.

Research Workflow Mindmap

I facilitated a research workshop around workflow mindmaps to broaden the team’s thinking about potential AI use cases. Each researcher categorized their typical efforts, broke them down into fundamental processes, then evaluated each process in terms of AI potential. Ultimately this helped us discover new applications for AI, like stimuli building and data graphing.

Sample of a brainstorm workshop exploring AI workflows.

Gains and Pains Retro

I hosted a “Gains and Pains” retro to help us learn from each other, avoid repeating AI mistakes, and share useful methods. Each researcher listed different applications of AI that they had attempted over the past month, then reflected on their usefulness and efficiency. We shared prompts for especially meaningful scenarios.

Sample of a brainstorm workshop for evaluating existing AI use cases.

Competitive Gathering Acceleration

Keeping current on competitive intel was critical for a new institution, so I took steps early on to equip teams with strong visibility into the fintech landscape:

a market intelligence database focused on deposits and digital banking

a UX journey database for analyzing competitor experience

monthly updates on key competitor developments and innovative features

quarterly updates on competitive metric positioning

a standardized Miro workspace for ad hoc analysis of competitor UX flows

Despite these investments, demand for competitive analysis continued to grow as strategy teams explored new product differentiators (loan top-ups, origination fee structures, etc.).

I experimented with transitioning parts of our competitive intelligence workflow to AI-assisted analysis. I developed a standardized set of prompts that allowed AI tools to generate initial metrics, graphs, and summaries.

With stakeholder approval, the team adopted an AI-first workflow for both recurring and new competitive intel tasks. As the system proved reliable, the team then shifted from drafting reports manually with AI assistance to AI-generated reports followed by human fact-checking and refinement.

This change significantly accelerated the process. What previously required a week of researcher effort could now be completed in about a day, freeing the team to focus on higher-complexity research work.

Experimentation to Integration

Through workshopping ideas, experimenting with new standards, and trial and error, our team gradually adopted AI into our daily workflows.

Impact

The research function directly supported Jenius Bank's growth to 250,000 active customers and $4B in combined deposits and loan balances within three years of launch, informing the product strategy, go-to-market positioning, and brand / feature differentiation that made that scale possible.

At peak operation, the team was managing 35+ active initiatives annually with a forward-looking backlog of 18–20 projects spanning a 4–6 month horizon — a level of demand that reflected how deeply research had been embedded into planning cycles across product, marketing, and CX.

Intake efficiency improved by ~50% as ad hoc requests gave way to structured planning — the function moved from reactive execution to a trusted partner in upstream strategy.

The AI-assisted competitive intelligence workflow cut research turnaround time by roughly 4x, freeing the team for higher-complexity research that required human judgment.

As my tenure at Jenius Bank concluded, the team started implementing a formal impact tracking system that reported on consumer reach, business verticals served, decision types influenced (strategy, concept, live product, etc.), improvements to VOC metrics, and regular post-study stakeholder retros to measure our efficiency over time. It was our next step toward making the value of research legible at the organizational level.

Lessons Learned

Research functions need legitimacy and education before they can scale. Early on, the biggest challenge was not capacity — it was organizational clarity.

A heavy tagging system can actually hinder insight discoverability. Better to keep early repository infrastructure lean and intuitive instead of comprehensive.

Don’t allow the team to become a ticketing system. Introducing intake and prioritization systems aligned research with the highest-impact work rather than urgency or pressure.

AI is a workflow augmentor, not a replacement. AI works best when it accelerates preparation, summarization, and aggregation; it struggles with interpretation and judgment.